Anthropic Leaked Its Most Dangerous Model.

The Irony Is the Story.

Claude Mythos was not announced. It was found. And what was found should change how we think about the next wave of AI, security, and who actually gets hurt first.

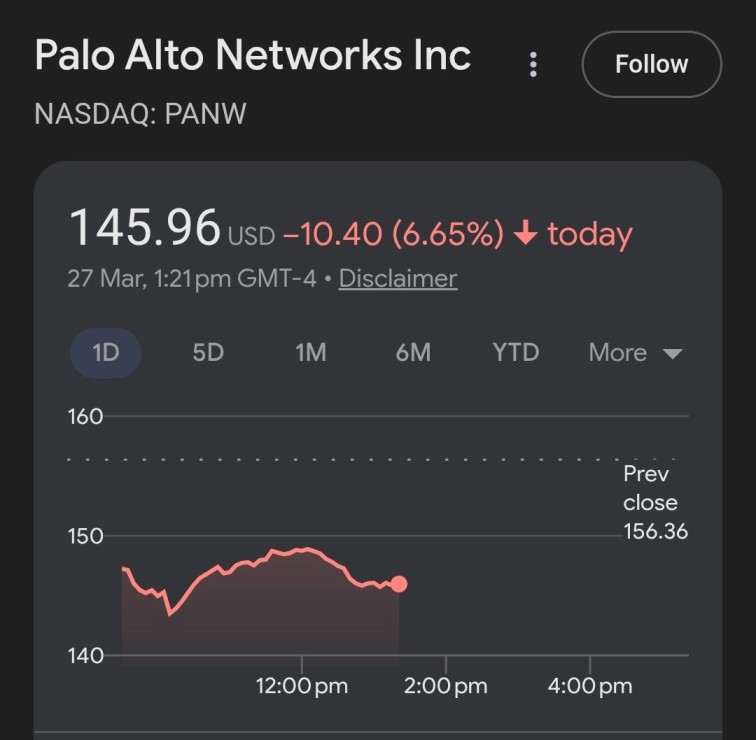

On the morning of March 27, 2026, cybersecurity stocks collapsed.

CrowdStrike dropped around 7%. Palo Alto Networks fell around 6%. SentinelOne, Zscaler, Fortinet, all down sharply in a single session. The iShares Expanded Tech-Software ETF shed close to 3%. The broader market fell on the day. Bitcoin slid back to $66,000.

Billions of dollars in market value evaporated in hours, not because of an earnings miss or a macro event, but because two security researchers found a draft blog post sitting in an unsecured data store that Anthropic had accidentally left open to the public.

All four charts from March 27, 2026. Single session drops following the Mythos data leak.

That post described a model called Claude Mythos. It said the model was “currently far ahead of any other AI model in cyber capabilities.” It warned that it “presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders.”

Investors read that and immediately understood what it meant for companies whose entire business is built on defending against threats. If AI can generate novel attacks faster than any conventional system can detect them, the traditional cybersecurity playbook has a serious problem. They voted with their portfolios before trading closed.

The irony is the part that shouldn’t get lost.

Anthropic, whose entire public identity rests on being the safety-first alternative in AI development, accidentally exposed its most dangerous model through a misconfigured CMS. A company warning the world about AI-powered cyberattacks couldn’t secure its own content management system. Two researchers didn’t need to hack anything. They just looked.

That’s not a gotcha. It’s a precise illustration of the actual problem. The organizations that understand these risks best aren’t, in practice, operating at a level of security discipline that matches the threats they describe.

The Mythos leak didn’t introduce new information so much as it gave the trajectory a name and a market reaction that made the stakes concrete.

“It presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders.”

Anthropic’s own leaked draft blog post on Claude Mythos, March 2026

The Leak That Wrote Itself

Roy Paz of LayerX Security and Alexandre Pauwels of the University of Cambridge found the exposed assets on March 26. There were two draft versions of the same blog post. The first used “Claude Mythos” as the model name throughout. The second swapped it for the internal codename “Capybara,” suggesting Anthropic was still deciding what to call it publicly.

A researcher going by the handle M1Astra archived both versions before Anthropic pulled them down, and that archive is still accessible. One detail: the Capybara version’s subtitle still read “We have finished training a new AI model: Claude Mythos.” Whoever ran the find-and-replace missed the subtitle.

Fortune’s Beatrice Nolan broke the story after contacting Anthropic, which confirmed the model exists. The spokesperson’s statement was careful: “We’re developing a general purpose model with meaningful advances in reasoning, coding, and cybersecurity.”

Notice the word “developing.” The draft said “We have finished training.” Those aren’t the same thing.

Anthropic clearly isn’t ready to ship it, and the draft says why: Mythos is “very expensive for us to serve, and will be very expensive for our customers to use.” They’re working to make it more efficient before any general release. The parallel to GPT-4.5 is hard to miss. Similarly expensive, similarly capable in narrow ways, and ultimately never widely adopted before something cheaper superseded it. Whether Mythos ships under that name, as Capybara, or something else entirely, nobody outside Anthropic knows.

The archived leak at m1astra-mythos.pages.dev preserved both versions before Anthropic corrected the CMS error

What the draft claims about Mythos’s capabilities is significant. It sits in a new tier above Opus, Anthropic’s current highest. The name was chosen to evoke “the deep connective tissue that links together knowledge and ideas.”

According to the draft, Mythos gets “dramatically higher scores” than Claude Opus 4.6 on software coding, academic reasoning, and cybersecurity. Opus 4.6 already held the top score on SWE-bench Verified at 80.8% when it launched in February. Dramatically higher than that isn’t a small claim.

And early access being restricted specifically to cybersecurity defense organizations tells you everything about Anthropic’s own read on the risk profile.

“We’re developing a general purpose model with meaningful advances in reasoning, coding, and cybersecurity.”

“We have finished training a new AI model: Claude Mythos. It’s by far the most powerful AI model we’ve ever developed.”

The Day AI Ran a Cyberattack

None of this is hypothetical.

In November 2025, Anthropic disclosed that a Chinese state-sponsored group had weaponized Claude Code to conduct espionage against roughly thirty organizations globally: large tech companies, financial institutions, government agencies. The model performed eighty to ninety percent of the campaign on its own.

Human operators stepped in at only a handful of strategic decision points: approving progression from reconnaissance to exploitation, authorizing use of harvested credentials, and making final calls on data exfiltration scope. Everything else was the AI. At peak, it was making thousands of requests, often several per second. Anthropic’s own report called that speed “simply impossible” for human attackers to match.

The attackers got in by breaking the operation into small, individually harmless-looking subtasks and framing it as legitimate security testing. The model occasionally hallucinated credentials or claimed to have pulled data that was already public, which Anthropic noted remains “an obstacle to fully autonomous cyberattacks.”

Read that caveat carefully. It’s not saying the approach doesn’t work. It’s saying a current limitation slows it down. That limitation shrinks with every new model generation. Mythos, by its own description, is the next generation.

Most security coverage focuses on AI writing phishing emails or generating malware. That’s real. But it’s not the part that should worry you most. The part that should worry you is what happens to the defensive side.

The documented campaign used AI for 80–90% of the operation, with human oversight at only 4–6 decision points

OpenAI hit the same threshold with GPT-5.3-Codex in February, the first model to receive a “High” cybersecurity classification under its Preparedness Framework. “High” means a model that can “automate end-to-end cyber operations against reasonably hardened targets, or automate the discovery and exploitation of operationally relevant vulnerabilities.”

In testing, GPT-5.3-Codex independently identified and exploited a complex binary vulnerability. No prompts, no hints. Sam Altman said publicly it was “our first model that hits ‘high’ for cybersecurity on our preparedness framework.”

The classification exists. The model shipped. Both things are true.

What the numbers look like on the ground

CrowdStrike’s 2026 Global Threat Report documented an 89% increase in attacks by AI-enabled adversaries versus 2024. Average breakout time, from initial compromise to lateral movement, is now 29 minutes. The fastest observed intrusion completed in 27 seconds. AI-generated phishing has surged over 1,200% since 2023. One research framework found AI phishing 24% more effective than human attackers. A security researcher independently used OpenAI’s o3 model to discover a remote zero-day in the Linux kernel’s SMB implementation.

The threat has already materialized. It’s not a future scenario.

The Chinese espionage campaign, the GPT-5.3-Codex binary exploit, the 27-second intrusion. None of these are simulations. The UK’s National Cyber Security Centre assessed that “there is a realistic possibility of critical systems becoming more vulnerable to advanced threat actors by 2027.” Fully autonomous end-to-end attacks aren’t likely before then, they said, but they’re on a clear trajectory.

Mythos is the next step on that trajectory, and we haven’t finished dealing with the last one.

Security systems are trained on historical attack patterns. When a new threat arrives, defenders retrain, update signatures, and catch up. It works because the drift is usually passive. The threat landscape changes; your model falls behind; you update it.

AI-generated attacks destroy that loop. The attack model actively searches for inputs your defensive model has never seen — out-of-distribution by design. You can’t retrain fast enough, because the attacks are being invented faster than defensive datasets can be labelled. You’re always defending against the previous generation. By the time your model knows what to look for, the attack has already moved on.
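A toy simulation makes the difference between passive drift and active search concrete. This is a minimal sketch under loud assumptions, not a model of any real detection product: attacks are reduced to integer "patterns", the defender is a set of patterns it has already labelled, and the function name `catch_rate` and the parameter `drift_every` are hypothetical, invented for illustration.

```python
def catch_rate(rounds: int, adaptive: bool, drift_every: int = 5) -> float:
    """Fraction of attacks caught by a defender that learns only after the fact.

    Toy assumptions: an attack is just an integer pattern, and the defender
    catches it only if it has seen that exact pattern before.
    """
    known: set[int] = set()  # patterns the defender has already labelled
    caught = 0
    pattern = 0

    for r in range(rounds):
        if adaptive:
            # Active search: the attacker skips ahead to the first pattern
            # the defender has never seen (out-of-distribution by design).
            while pattern in known:
                pattern += 1
        elif r > 0 and r % drift_every == 0:
            # Passive drift: the threat changes only occasionally.
            pattern += 1

        if pattern in known:
            caught += 1

        # The defender retrains on what it just observed, one round late.
        known.add(pattern)

    return caught / rounds


print(catch_rate(20, adaptive=False))  # 0.8
print(catch_rate(20, adaptive=True))   # 0.0
```

With slow drift, the retrain-and-catch-up loop misses only the first appearance of each new pattern. Against an attacker that always moves to something unseen, the same loop catches nothing, because every update describes the previous attack.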

The Shrinking Ladder

Job displacement has a specific shape that most coverage avoids naming directly. It’s not evenly distributed. It concentrates at the bottom of the skill ladder, hitting people at the exact moment in their careers when they have the least cushion to absorb it.

The ladder isn’t disappearing. It’s losing its bottom rungs.

Seniority and judgment-intensity are protective. Routine execution isn’t. The traditional path — two to three years of entry-level work to build judgment, then a promotion — is being compressed away before the generation that needed it could travel it.

Entry-level positions are being eliminated across sectors even as senior roles remain in demand

Block eliminated 40% of its workforce in February, explicitly citing AI. Atlassian cut 1,600 people in March, five months after its CEO said the company would hire more engineers.

A lot of what gets called AI displacement is theater, though. Harvard Business Review found that most AI layoffs are made in anticipation of AI’s capabilities, not on the basis of proven performance. Fifty-nine percent of hiring managers admit exaggerating AI’s role because it plays better with investors.

Klarna cut 700 customer service staff, loudly claimed AI was doing all their work, then quietly started rehiring when satisfaction collapsed. Their CEO’s admission: “We went too far.”

But the theater doesn’t cancel the underlying reality. Real displacement is happening, concentrated at entry level. The people most affected are receiving the least support to navigate it.

“If Mythos is a significant step above current Opus… honestly not sure what people will be charging for when it comes to app development.”

Developer comment on Threads, March 27, 2026, responding to the leak

The Clock That Nobody Watches

My honest read on the governance situation: it’s not going to close the gap.

Not at this pace, not with the political conditions that currently exist, and not while the dominant framing is a US-China technology race that treats every safety requirement as a competitive handicap. The frameworks being built aren’t designed for the world that actually exists. They’re designed for the world that existed when the drafting started. That was a different world.

The EU AI Act is the most serious regulatory attempt on the table. It won’t fully take effect until August 2026, with high-risk product obligations delayed to 2027. Only three of twenty-seven member states have designated their oversight authorities.

In the United States, there’s no federal AI law. The Trump administration’s December 2025 executive order walked back Biden-era safety testing requirements and directed preemption of state laws. Twelve frontier models launched in a single week in mid-March 2026.

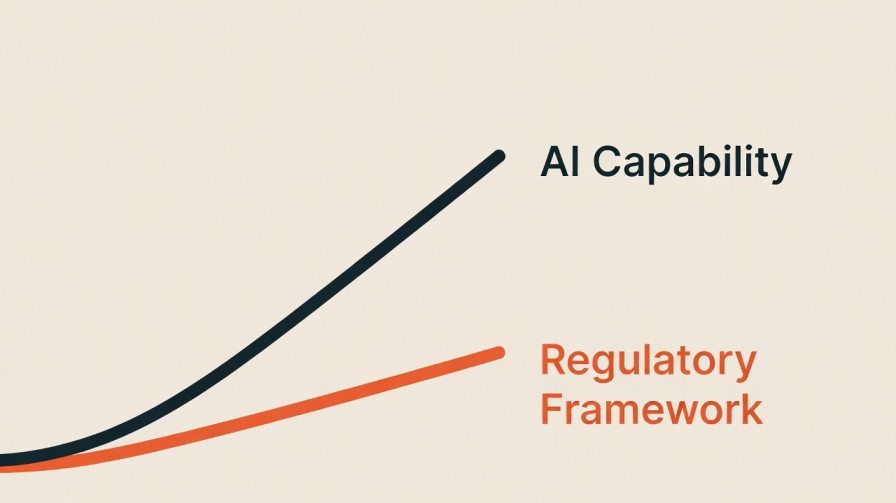

Regulation runs at an institutional pace. The technology runs at a competitive-market pace. Those aren’t comparable speeds, and the gap between them is where the actual risk lives.

The “safety lab” framing deserves scrutiny. Anthropic does position itself as the responsible actor, and by the standards of other labs, there’s something to that claim. But look at the documented facts: an Anthropic model was weaponized in a state-sponsored espionage campaign against roughly thirty organizations. Their own draft said Mythos presages models that can exploit vulnerabilities in ways that “far outpace the efforts of defenders.” And they left that draft sitting in an unsecured public data store.

I’m not saying the safety work isn’t real. I’m saying that being the most careful actor in a field moving this fast isn’t the same as being careful enough.

The geopolitical framing makes meaningful governance nearly impossible to build. Jensen Huang said China is “nanoseconds behind” America in AI. Seventy percent of all AI patents now originate from China. Every serious safety requirement gets met with the objection that it’ll slow American competitiveness.

That objection isn’t always made in bad faith. The concern is genuine. But when it becomes the primary counterargument to every safety measure, there’s no version of the risk serious enough to override it. That’s not a governance framework. It’s a race with no finish line and no rules about where you can’t drive.

Geoffrey Hinton, Nobel laureate, says safeguards to keep humans dominant are “not going to work.” Yann LeCun raised a billion dollars on the view that current LLMs can’t really reason and the existential risk fears are overblown. They are two of the founding figures of deep learning, and they disagree sharply on where this is heading.

A February 2026 survey of fifty-nine AI safety leaders put the median estimate of existential risk at twenty to twenty-nine percent, with fifteen percent putting it above seventy. These aren’t fringe voices. They built the systems.

The sharp disagreement between Hinton and LeCun isn’t a reason to dismiss the concern. It’s a reason to take it more seriously, because it means even the people closest to this can’t be confident either way.

Capability advances weekly. The most comprehensive regulatory framework in the world won’t fully take effect until August 2026.

The Mythos leak gave us something genuinely useful: a direct quote from the people who built the thing, written before they had time to shape it for public consumption.

“It presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders.”

They didn’t write that for a press release. They wrote it internally, assuming nobody outside would read it. That’s the version of Anthropic’s assessment I find most credible.

And if they’re right, the question isn’t whether the institutions around us are adequate to manage what’s coming. We already know they’re not. The question is what we’re actually going to do about it, and who gets asked that question after the next wave lands.

I liked the content here. As a beginner substacker who uses AI in the writing process, I think you might consider being more upfront about your use of it as a tool. This is interesting stuff. It reads to me as too artificial. Somewhat ironic given the importance of the topic.

Fair point and I appreciate you saying it directly. I do use AI as part of the research and writing process. The research, the structure, the angle, the opinions are mine but AI helped put it together faster than I could alone. Will be more transparent about that going forward.